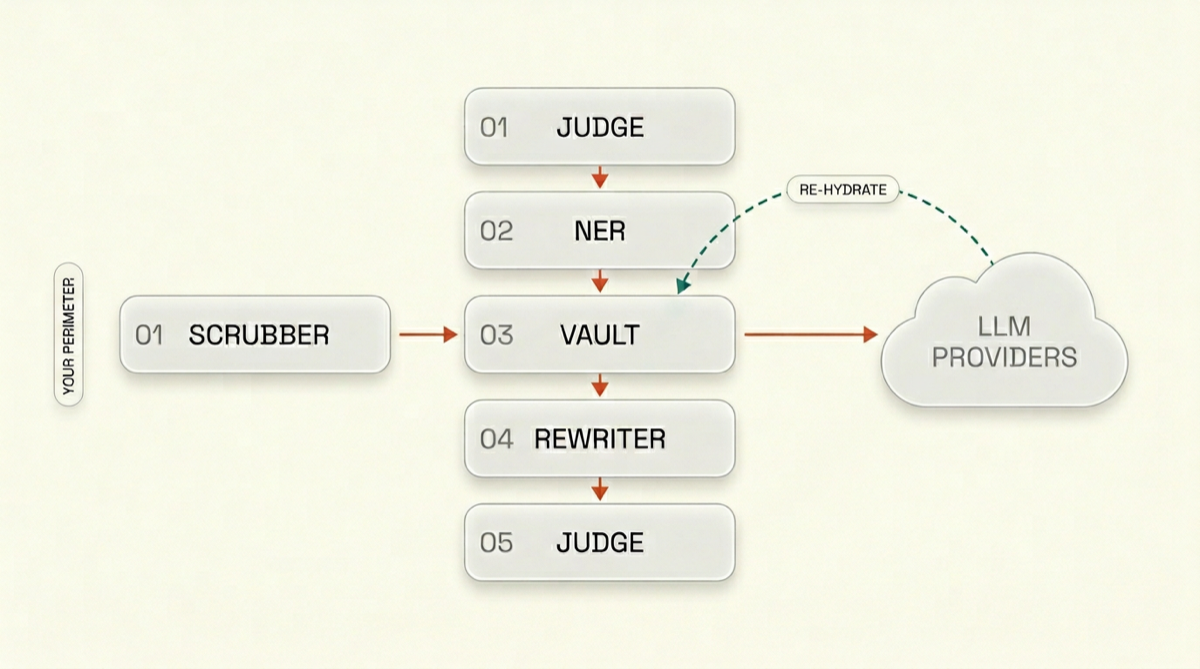

Route any prompt to any model (OpenAI, Anthropic, Mistral, Gemini or your own stack) through a local pipeline that strips, masks and re-hydrates personal data. The upstream model sees pseudonyms; your firm sees the real names.

OpenAI-compatible gateway · API and UI

What leaves your perimeter

████████ ████ advised ██████████ on the █████ merger.

What the model sees

Person_12 and Org_4 advised Client_A on the Northern deal.

What your team sees back

Maria García and Cuatrecasas advised Acme Corp on the Nordic merger.

Built for regulated work

Deterministic scrubbing first, then NER and rewriter models, then an adversarial judge. Answers come back through the same vault so names and entities reappear only for you.

Local execution · Tunable strictness · Audit trail

Deterministic scrubber

Discriminative NER

Reversible vault

SLM rewriter

Adversarial judge

Your LLM provider

Re-hydrate response

Each stage has a job. Together they keep quasi-identifiers and edge cases from slipping through.

Regex and dictionaries for NIF/DNI, IBAN, case numbers, court references and other patterns you can explain to a regulator.
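To make the idea concrete, here is a minimal sketch of a checksum-backed pattern stage in Python. It covers only the Spanish DNI and Spanish IBANs; the function names and the `[DNI]` / `[IBAN]` placeholders are illustrative assumptions, not Veil's actual rules or output format.

```python
import re

# Control letters for the Spanish DNI: index is the 8-digit number modulo 23.
DNI_LETTERS = "TRWAGMYFPDXBNJZSQVHLCKE"

DNI_RE = re.compile(r"\b(\d{8})([A-Za-z])\b")
# Spanish IBAN: "ES", 2 check digits, 20 account digits, optionally grouped in fours.
IBAN_ES_RE = re.compile(r"\bES\d{2}(?: ?\d{4}){5}\b")


def valid_dni(number: str, letter: str) -> bool:
    """Return True when the control letter matches the number."""
    return DNI_LETTERS[int(number) % 23] == letter.upper()


def valid_iban(iban: str) -> bool:
    """Mod-97 check: move the first four characters to the end, map letters to 10..35."""
    compact = iban.replace(" ", "").upper()
    rearranged = compact[4:] + compact[:4]
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1


def scrub(text: str) -> str:
    """Mask DNIs and Spanish IBANs that pass their checksum (hypothetical placeholders)."""
    text = IBAN_ES_RE.sub(
        lambda m: "[IBAN]" if valid_iban(m.group(0)) else m.group(0), text
    )
    text = DNI_RE.sub(
        lambda m: "[DNI]" if valid_dni(m.group(1), m.group(2)) else m.group(0), text
    )
    return text


print(scrub("DNI 12345678Z, IBAN ES91 2100 0418 4502 0005 1332."))
```

Each rule here is a pattern plus a published checksum, so a match can be justified line by line. In this sketch, strings that fail the checksum are left untouched; a real deployment might prefer to mask them anyway or hand them to later stages.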

Fine-tuned encoder models spot people, organizations, locations and legal entities where rules alone miss context.

Deterministic per-session tokens: the same person stays Person_12 across turns. The vault re-hydrates the model reply before anyone reads it.
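The mapping described above can be sketched as a small in-memory class. This is an illustration under assumptions: `SessionVault`, its method names and the numeric `Kind_N` token format are hypothetical, and a real vault would also need persistence, access control and encryption.

```python
import re
from collections import defaultdict


class SessionVault:
    """Hypothetical per-session vault: the same value always maps to the same token."""

    _TOKEN_RE = re.compile(r"\b[A-Za-z]+_\d+\b")

    def __init__(self):
        self._token_by_value = {}           # (kind, value) -> token
        self._value_by_token = {}           # token -> original value
        self._counters = defaultdict(int)   # kind -> last number issued

    def tokenize(self, kind, value):
        """Return the existing token for this value, or issue the next one."""
        key = (kind, value)
        if key not in self._token_by_value:
            self._counters[kind] += 1
            token = f"{kind}_{self._counters[kind]}"
            self._token_by_value[key] = token
            self._value_by_token[token] = value
        return self._token_by_value[key]

    def rehydrate(self, text):
        """Replace known tokens in a model reply; unknown tokens are left as they are."""
        return self._TOKEN_RE.sub(
            lambda m: self._value_by_token.get(m.group(0), m.group(0)), text
        )


vault = SessionVault()
masked = f"{vault.tokenize('Person', 'Maria García')} advised {vault.tokenize('Org', 'Acme Corp')}."
print(masked)
print(vault.rehydrate(masked))
```

Matching whole tokens with word boundaries keeps `Person_1` from being replaced inside `Person_12`.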

A small model tuned with RL handles indirect identifiers and phrasing that still points to a single individual.

A second small model attacks the masked text for re-identification risk and scores leakage with k-anonymity and l-diversity style metrics before anything is sent upstream.
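For readers unfamiliar with these metrics, the sketch below shows their textbook definitions over a small table of quasi-identifiers. It is not the judge itself: how the judge model extracts attributes from masked text and turns them into a score is not described here, and the function names and sample rows are assumptions for illustration.

```python
from collections import Counter, defaultdict


def k_anonymity(quasi_ids):
    """Size of the smallest group of records sharing the same quasi-identifier tuple."""
    counts = Counter(tuple(q) for q in quasi_ids)
    return min(counts.values(), default=0)


def l_diversity(records):
    """Smallest number of distinct sensitive values within any quasi-identifier group."""
    groups = defaultdict(set)
    for quasi, sensitive in records:
        groups[tuple(quasi)].add(sensitive)
    return min((len(values) for values in groups.values()), default=0)


# Hypothetical rows: (role, city) are quasi-identifiers, the matter type is sensitive.
rows = [
    (("lawyer", "Madrid"), "fraud"),
    (("lawyer", "Madrid"), "tax"),
    (("judge", "Bilbao"), "fraud"),
    (("judge", "Bilbao"), "fraud"),
]
print(k_anonymity(q for q, _ in rows))
print(l_diversity(rows))
```

A low k means some combination of attributes points to very few people; a low l means that even inside a large enough group, the sensitive value is effectively disclosed.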

Tune strictness per matter, client or API key — not by retraining. Legal sets the policy; engineering ships the binary.

Example policy surface
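One way to picture per-matter, per-client and per-key strictness is a lookup of policy objects. Everything in this sketch is hypothetical: the field names, the strictness levels, the identifiers and the precedence order (matter, then client, then API key) are assumptions for illustration, not Veil's actual policy schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    strictness: str = "standard"   # hypothetical levels: "relaxed", "standard", "strict"
    mask_kinds: frozenset = frozenset({"Person", "Org"})
    min_k: int = 5                 # hypothetical threshold the judge must reach


DEFAULT = Policy()

# Hypothetical overrides keyed by (scope, identifier).
OVERRIDES = {
    ("matter", "example-matter"): Policy(
        strictness="strict",
        mask_kinds=frozenset({"Person", "Org", "Location"}),
        min_k=10,
    ),
    ("api_key", "example-key"): Policy(strictness="relaxed", min_k=3),
}


def resolve(matter=None, client=None, api_key=None):
    """Pick the first matching override: matter, then client, then API key."""
    for scope, value in (("matter", matter), ("client", client), ("api_key", api_key)):
        if value is not None and (scope, value) in OVERRIDES:
            return OVERRIDES[(scope, value)]
    return DEFAULT


print(resolve(matter="example-matter", api_key="example-key").strictness)
```

Because policies are plain data, changing strictness is a configuration edit rather than a model retrain.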

Same gateway, multiple backends. Swap providers without changing your anonymization story.
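Since the gateway is described as OpenAI-compatible, swapping backends could amount to changing one field in the request. The sketch below builds, but does not send, a chat-completions request using only the Python standard library. The gateway address, the API key and the model names are placeholders and assumptions, not documented values.

```python
import json
import urllib.request

# Hypothetical local gateway address; the path follows the OpenAI chat-completions convention.
GATEWAY_URL = "http://localhost:8080/v1/chat/completions"


def build_request(model, prompt, api_key="local-key"):
    """Build a POST request in the OpenAI chat-completions shape (not sent here)."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        GATEWAY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Same URL and same prompt; only the model name changes between backends.
req_a = build_request("provider-a-model", "Summarize the attached agreement.")
req_b = build_request("provider-b-model", "Summarize the attached agreement.")
print(req_a.full_url == req_b.full_url)
```

Passing either request to `urllib.request.urlopen` would send it, assuming a gateway is listening at that address.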

From litigation to public-sector disclosure — any workflow where a name in the wrong place is the whole problem.

Draft and research with LLMs without client names or opponent details crossing your perimeter.

Generate summaries and extracts for data subjects without leaking other individuals in the same file set.

Let deal teams use frontier models on counterparty and employee data under a controlled pseudonym layer.

Summarize and structure clinical text for operations while PHI stays tokenized for external models.

Run LLM-assisted review on transactions and customer files with account tokens instead of live identifiers.

Prepare releases and internal briefings with consistent redaction before any cloud model sees the text.

Alpha access, implementation partners and design reviews. Tell us who you are and what you want to run through Veil.

Open core first. Hosted and enterprise when you need someone else to run the judge models and SLAs.

Free

Self-host the gateway, models and policies. Full source for the pipeline and UI.

From €299 / month

Managed multi-tenant gateway with upgrades, monitoring and predictable scale.

Custom

Private cloud, BYOK, on-prem judge and rewriter SLMs, custom policies and contractual terms.

We are onboarding a small set of firms and platform teams for the alpha.